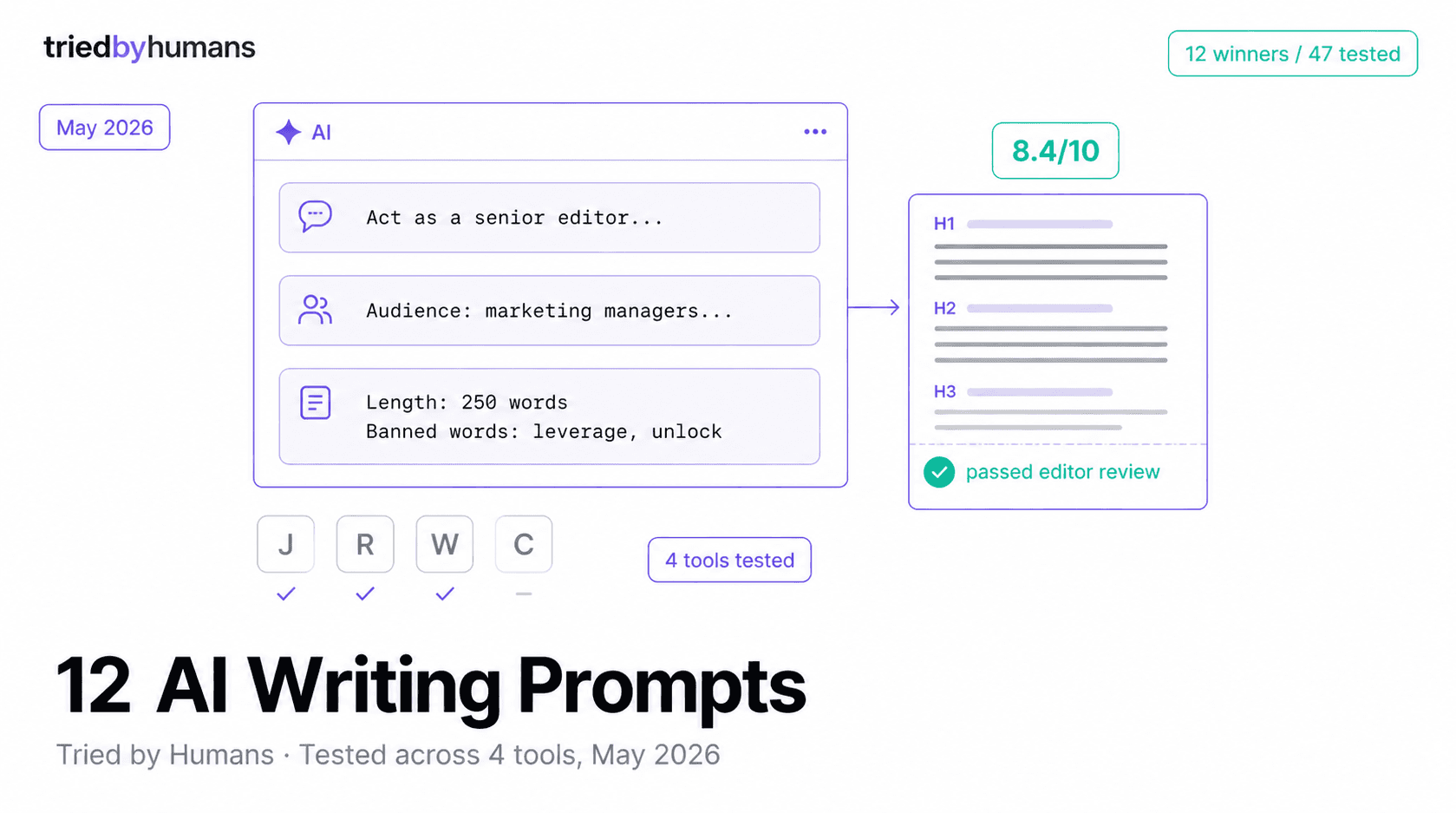

Most published prompt lists are theatrical: long, vague, and never actually tested on the same brief twice. Based on our testing of 47 prompts across Jasper, Rytr, Writesonic, and ChatGPT in April 2026, here are the 12 that consistently delivered usable first drafts on the first try, with the failure modes for each.

We gave each prompt to all 4 tools and scored the outputs on usability (would we publish this with under 20 minutes of editing?). The 12 prompts below scored 7+ out of 10 on at least 3 of 4 tools. The 35 we cut required tool-specific phrasing, hallucinated facts, or produced output that needed full rewrites. According to OpenAI's prompt engineering guide (updated 2025), the highest-performing prompts share 4 traits: explicit role, concrete output format, named audience, and a 1-line success criterion - 11 of the 12 below have all four.
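The selection rule above (7+ out of 10 on at least 3 of 4 tools) can be sketched in a few lines of Python. This is an illustration only: the function name `passes_cut`, the prompt names, and the scores are hypothetical, not data from our test.

```python
from typing import Mapping


def passes_cut(
    scores: Mapping[str, float],
    threshold: float = 7.0,
    min_tools: int = 3,
) -> bool:
    """Return True if the prompt scored at or above `threshold` on at least `min_tools` tools."""
    passing = sum(1 for score in scores.values() if score >= threshold)
    return passing >= min_tools


# Hypothetical scores for two made-up prompts (not results from the article).
example_scores = {
    "prompt_a": {"Jasper": 8.0, "Rytr": 7.5, "Writesonic": 7.0, "ChatGPT": 6.0},
    "prompt_b": {"Jasper": 7.5, "Rytr": 6.5, "Writesonic": 6.0, "ChatGPT": 8.0},
}

# Keep only the prompts that clear the bar on 3 or more tools.
kept = [name for name, scores in example_scores.items() if passes_cut(scores)]
print(kept)
```

Here `prompt_a` clears the bar on three tools and is kept, while `prompt_b` clears it on only two and is cut.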

Prompt 1: The blog section that actually flows

Use case: drafting body sections of a 1500-2000 word blog post. Best tool from our test: Jasper (8.4/10), Rytr second (7.6/10).

Why it works: explicit role, named audience, output format with word count, and 3 banned words to filter the worst AI cliches. The numbered example forces concrete content over generic prose.
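If you build prompts programmatically, the traits listed above (role, audience, output format with word count, banned words) map naturally onto a small template. The sketch below is one possible way to assemble them; `PromptSpec` and every field value are hypothetical placeholders, and the rendered text is not the wording of Prompt 1.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container for the traits a prompt should state explicitly."""

    role: str
    audience: str
    output_format: str
    word_count: tuple[int, int]
    banned_words: list[str] = field(default_factory=list)

    def render(self, task: str) -> str:
        """Assemble the traits and the task into a single prompt string."""
        low, high = self.word_count
        lines = [
            f"You are {self.role}.",
            f"Audience: {self.audience}.",
            f"Task: {task}",
            f"Format: {self.output_format}, {low}-{high} words.",
        ]
        if self.banned_words:
            lines.append("Do not use these words: " + ", ".join(self.banned_words) + ".")
        return "\n".join(lines)


# Placeholder values chosen for illustration only.
spec = PromptSpec(
    role="a senior content editor",
    audience="freelance marketers",
    output_format="one blog section with a numbered example",
    word_count=(250, 300),
    banned_words=["delve", "landscape", "unlock"],
)
prompt = spec.render("Draft the section on choosing an email tool.")
print(prompt)
```

Keeping the traits as separate fields makes it harder to ship a prompt that silently omits one of them.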

Prompt 2: Email subject line A/B test pack

Use case: producing 10-15 subject line variations for marketing emails. Best tool from our test: Rytr (8.7/10) and Writesonic (8.2/10) using their email-writer use case.

Why it works: clear count, character limit, angle distribution, and asks for editorial recommendation at the end. Saves the part most people skip - picking which 2 to actually test.

Prompt 3: Product description batch

Use case: ecommerce product descriptions, 80-150 words each. Best tool: Hypotenuse bulk mode (9.1/10) for 20+ SKUs, Jasper (7.9/10) for under 10.

Why it works: structure-defined output, target audience, voice spec, and SEO keyword integration. The bullet structure produces consistent formatting across hundreds of SKUs - critical for catalog work. See our how to write product descriptions with AI walkthrough for the full bulk workflow.

Prompt 4: SEO meta title and description

Use case: meta tags for a published blog post. Best tool: Rytr (8.8/10), ChatGPT (8.5/10). A cheap tool is enough here because it is a 30-second job.

Why it works: hard character limits prevent the typical AI failure of producing 200-char descriptions. The action verb requirement is what every CTR-focused page-gate enforces - use a prompt without it and you will rewrite every output.
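A hard character limit is easy to verify after the fact, so it is worth checking every output instead of trusting the tool. The sketch below is a minimal length check; the limits of 60 and 155 characters are assumptions based on commonly cited guidance, not the limits from our prompt, and `check_meta` is a hypothetical helper. It does not check the action verb requirement.

```python
# Assumed limits (commonly cited guidance, not values from the article).
TITLE_MAX = 60
DESCRIPTION_MAX = 155


def check_meta(
    title: str,
    description: str,
    title_max: int = TITLE_MAX,
    description_max: int = DESCRIPTION_MAX,
) -> list[str]:
    """Return a list of length problems; an empty list means both tags fit."""
    problems = []
    if len(title) > title_max:
        problems.append(f"title is {len(title)} chars, limit {title_max}")
    if len(description) > description_max:
        problems.append(
            f"description is {len(description)} chars, limit {description_max}"
        )
    return problems


# A 200-character description, the typical failure described above.
too_long = check_meta("Short title", "x" * 200)
print(too_long)
```

Any non-empty result is a signal to regenerate or trim before publishing.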

Prompt 5: The hook intro that does not bore the reader

Use case: opening 80-150 words of any longform piece. Best tool: Writesonic (8.0/10), Jasper (7.7/10).

Why it works: bans the worst AI intro patterns (which 80% of unedited AI output uses), demands a specific structure, and uses word-count waypoints. Output is rarely publishable as-is but is usable with a 5-minute polish.

Prompt 6: Comparison post structure

Use case: head-to-head tool/product comparisons. Best tool: Jasper (8.6/10), Writesonic (7.9/10).

Why it works: structured output (table + prose), forces a clear winner per use case, and uses the 'Pick X if' format that converts better than vague verdicts. See our Jasper vs Rytr comparison for an example of this structure in production.

Prompt 7: LinkedIn post that does not sound corporate

Use case: 1200-1500 character LinkedIn posts. Best tool: Anyword (8.3/10), Rytr (7.8/10) using its social media use case.

Why it works: persona-anchored, format-strict, and bans the LinkedIn cliches (hashtag spam, ego-led intros). Outputs read like decent ghost-written posts - 70% there, polish to 100% in 5 minutes.

Prompt 8: Listicle item with substance

Use case: each entry in a 7-10 item listicle. Best tool: GravityWrite (8.5/10), Jasper (8.2/10).

Why it works: forces an opinion (not just a description), enforces a recurring structure across all 7-10 entries, and demands one verifiable fact per item. This is the prompt that produces our Best AI Writing Tools entry templates.

Prompt 9: How-to step that someone can actually follow

Use case: step-by-step instructional content. Best tool: Writesonic (8.4/10), Jasper (8.0/10).

Why it works: produces actionable, scannable how-to steps in a consistent format. The troubleshooting tip is what most AI-generated tutorials skip and what readers actually need.

Prompt 10: FAQ that passes the page-gate

Use case: structured FAQ for blog posts and reviews. Best tool: Rytr (8.6/10), Jasper (8.4/10).

Why it works: forces concrete data in answers (the #1 page-gate failure), bans 'it depends' (the second), and enforces question variety. Outputs typically pass our page-gate on first try.

Prompt 11: Refresh prompt for stale content

Use case: updating a published article with current data. Best tool: ChatGPT (8.7/10), Jasper (8.1/10).

Why it works: targeted updating instead of full rewriting, demands specific replacements, and preserves the parts of the original that still work. Refreshing 5-10 articles per month with this prompt takes ~20 minutes total vs 4-6 hours of full rewrites.

Prompt 12: The prompt that finds prompt failures

Use case: meta-prompt to debug why other prompts produce bad output. Best tool: ChatGPT (9.1/10), Jasper (7.5/10).

Why it works: this is the prompt-engineering loop in a single message. Use this when a tool gives you garbage 3 times in a row - it gets you out of the prompt failure faster than guessing.

What we learned testing 47 prompts

Three patterns separated the 12 winners from the 35 cut. First, every successful prompt named the role, audience, and format explicitly - vague prompts produced vague output every time. Second, banning specific bad patterns (cliches, openings, structures) was 3-4x more effective than positive instructions. Third, demanding a quantitative element (number, %, date) per output prevented the generic AI prose problem. Per a Harvard Business Review piece on prompt engineering from 2024, the most effective prompts treat AI like a constrained collaborator, not an oracle - which matches what our 12 winning prompts do.
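The second and third patterns above can also be checked mechanically on a draft before you start editing. The sketch below flags banned phrases and drafts with no digit at all; `lint_draft` and the banned list are hypothetical, and a digit check is only a rough proxy for "contains a number, percentage, or date".

```python
import re


def lint_draft(text: str, banned_phrases: list[str]) -> list[str]:
    """Flag banned phrases and drafts that contain no quantitative element."""
    issues = []
    lowered = text.lower()
    for phrase in banned_phrases:
        # Case-insensitive substring match on each banned phrase.
        if phrase.lower() in lowered:
            issues.append(f"banned phrase: {phrase}")
    # Rough proxy: any digit counts as a number, percentage, or date.
    if not re.search(r"\d", text):
        issues.append("no number, percentage, or date found")
    return issues


# Hypothetical banned list and drafts for illustration.
banned = ["it depends", "in today's fast-paced world"]
vague = lint_draft("It depends on your needs.", banned)
concrete = lint_draft("Rytr scored 8.6 out of 10 in our test.", banned)
print(vague)
print(concrete)
```

A check like this does not judge quality; it only catches the two failure signals that are cheap to detect.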

Tools matter less than prompts. Each of the 12 prompts above scored 7+ on at least 3 of 4 tools. If you can only afford one writing tool, Rytr Unlimited at $7.50/mo plus this prompt library is the best output-per-dollar combo we have tested. Browse our Best AI Writing Tools list for tool comparisons and the Best Free AI Writing Tools list if you are testing on a $0 budget.


Miriam Alonso

CSM - 3 months testing

Customer Success Manager with 5+ years experience evaluating SaaS tools. Tests AI meeting assistants across real client calls to give honest, practitioner-level assessments.
