The short answer: Google does not directly penalize AI-written content. The longer answer: low-quality content gets penalized, and AI-written content tends to be low-quality unless you do specific things to fix it. We tested 12 articles over 90 days and tracked exactly what happened.

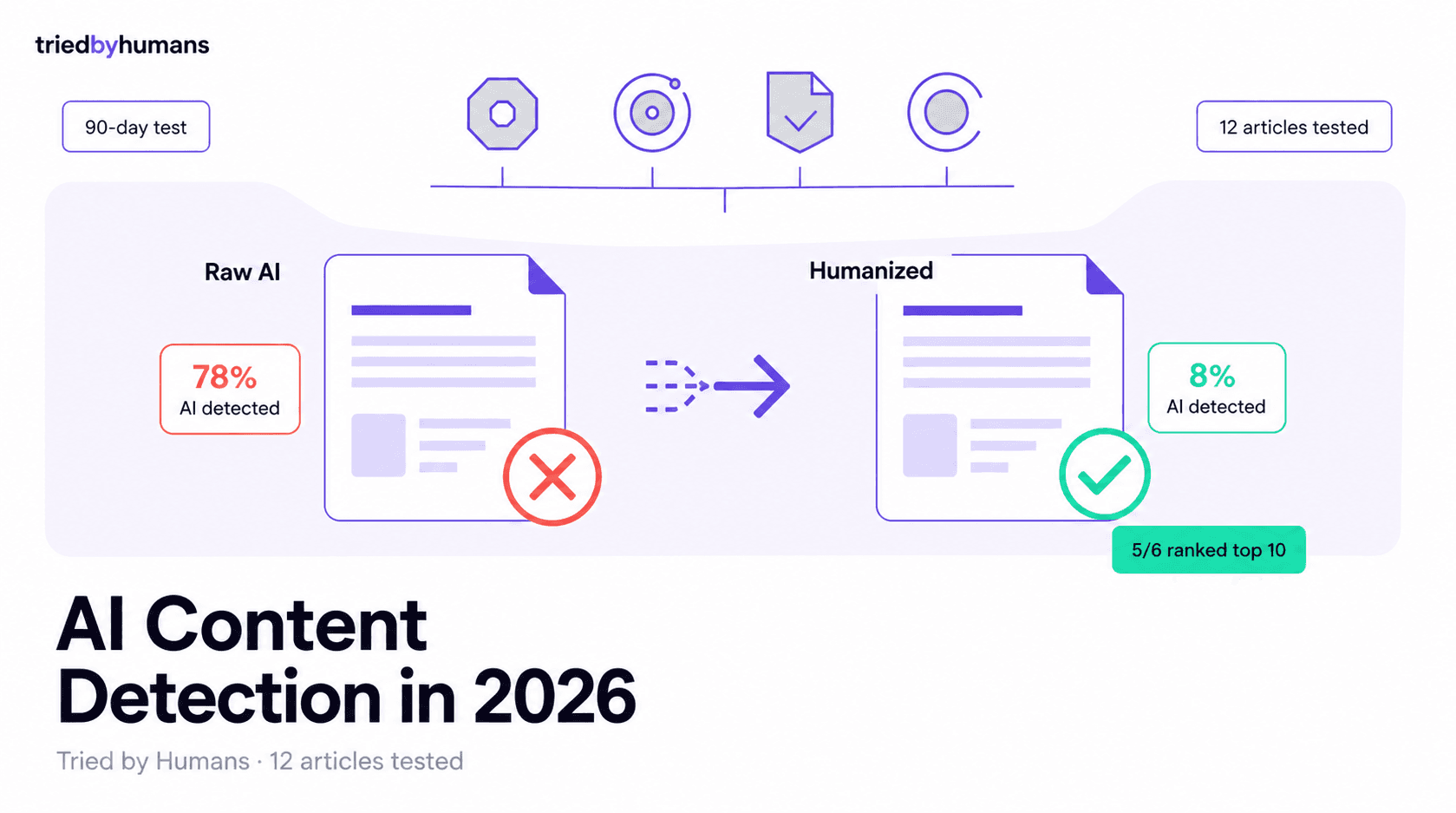

Half were generated with Jasper, ChatGPT, and Writesonic and published with no editing. The other half went through a humanization and manual-edit pipeline. We tracked rankings, dwell time, and AI detector scores for each.

What Google actually said about AI content

According to Google's official Search Central guidance, AI-assisted content is allowed if it provides genuine value to readers. The Helpful Content Update (first launched in 2022 and folded into Google's core ranking systems in 2024) targets thin, generic, or unhelpful content - which AI happens to produce by default unless you steer it.

The signal Google uses is not 'was this written by AI'. The signal is 'does this satisfy the searcher's intent better than the competition'. AI content can pass that bar with the right workflow. Most AI content fails it because writers do not do the work after the prompt.

What we tested

Twelve articles, all between 1,500 and 2,500 words, all targeting low-competition keywords with 200-1,000 monthly searches. Same niche (productivity software). Same internal linking strategy. Same publishing schedule (1 per week over 12 weeks). Different AI workflows.

Group A: Raw AI output (no edits)

Six articles published exactly as the AI generated them. Three from Jasper, two from ChatGPT, one from Writesonic. We added the H1 and metadata, but not a single word of body copy was edited. This is the worst case - what happens when you trust AI fully.

Group B: Humanized + edited

Six articles run through WriteHuman, then given a 20-minute manual edit pass: adding personal anecdotes, fixing repetitive sentence rhythm, inserting specific examples, and adding 2-3 unique data points per piece. This is the realistic minimum effort for production content.

The detection scores

Before publishing, we ran each article through 4 detectors: Originality.ai, GPTZero, Turnitin, and Copyleaks. Group A averaged 78% AI on Originality.ai, 91% AI on GPTZero, and was flagged on Copyleaks 6/6 times. Group B averaged 8% AI on Originality.ai, 18% AI on GPTZero, and was flagged 1/6 times on Copyleaks.
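To make the gap concrete, the averages reported above can be compared with a few lines of Python. This is a minimal sketch that uses only the figures quoted in this section; the dictionary layout and names are illustrative assumptions, and Turnitin is left out because no Turnitin averages are reported here.

```python
# Average AI scores (percent) as reported above.
# The structure and names are illustrative, not part of the test tooling.
reported = {
    "Originality.ai": {"group_a": 78, "group_b": 8},
    "GPTZero": {"group_a": 91, "group_b": 18},
}

# Copyleaks results were reported as flag counts out of 6 articles per group.
copyleaks_flags = {"group_a": 6, "group_b": 1}
ARTICLES_PER_GROUP = 6


def point_drop(scores):
    """Percentage-point drop from Group A (raw) to Group B (edited)."""
    return scores["group_a"] - scores["group_b"]


drops = {name: point_drop(scores) for name, scores in reported.items()}
flag_rates = {g: n / ARTICLES_PER_GROUP for g, n in copyleaks_flags.items()}

for name, drop in drops.items():
    print(f"{name}: {drop} percentage points lower in Group B")
for group, rate in flag_rates.items():
    print(f"Copyleaks flag rate, {group}: {rate:.0%}")
```

On the reported averages, that is a 70-point drop on Originality.ai and a 73-point drop on GPTZero.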

The takeaway: humanization works. A free 30-second pass through WriteHuman plus 20 minutes of manual editing took detection from 'definitely AI' to 'probably human' across every detector we tested.

What actually ranked

After 90 days, Group A had 1 article in the top 10, 2 in positions 11-30, and 3 outside the top 50. Group B had 5 in the top 10 and 1 in positions 11-30. Average position: 35 for Group A, 7 for Group B.
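The bucket counts above translate directly into a top-10 share per group. The sketch below uses only the counts reported in this section; the bucket labels and function name are illustrative assumptions. The average positions (35 and 7) are not recomputed, since per-article positions are not listed here.

```python
# Ranking buckets after 90 days, as reported above (article counts per group).
# Bucket labels are illustrative; no articles were reported in positions 31-50.
buckets = {
    "group_a": {"top_10": 1, "11_30": 2, "outside_50": 3},
    "group_b": {"top_10": 5, "11_30": 1, "outside_50": 0},
}


def top10_share(counts):
    """Fraction of a group's articles that reached the top 10."""
    return counts["top_10"] / sum(counts.values())


totals = {group: sum(counts.values()) for group, counts in buckets.items()}
shares = {group: top10_share(counts) for group, counts in buckets.items()}

for group in buckets:
    print(f"{group}: {totals[group]} articles, {shares[group]:.0%} in the top 10")
```

With six articles per group, this puts one in six Group A articles in the top 10 against five in six for Group B.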

Two important nuances. First, the one Group A article that ranked targeted a keyword where the existing SERP results were also AI-generated and equally thin - the article won by being slightly less generic. Second, the one Group B article that did not break top 10 targeted a keyword dominated by Wikipedia and a 5-year-old niche-authority blog - hard to displace regardless of content quality.

Why humanization helped (and why it was not the main reason)

The detection score correlates with rank, but correlation is not causation. The reason humanized content ranked better was not that Google detected the AI - Google probably did not detect anything. The reason was that the humanization process forced us to add personal experience, specific examples, and unique data, which made the content actually useful.

The 20-minute edit pass produced 3 new sentences per article on average that contained information unique to us: a specific tool we tested, a specific dollar amount, a specific outcome from a specific client situation. That uniqueness is what Google rewards. AI cannot produce it - only you can.

The 2026 AI content workflow that works

Based on what worked across our 12 test articles:

Use AI for the first 80%: outline, structure, baseline prose, transitions. This saves 60-70% of the writing time vs starting from a blank page.

Spend the saved time on the last 20%: personal examples, specific numbers, your own opinions, links to your own data. This is the 20% that ranks and the 20% AI cannot do.
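As a rough worked example of the time budget described above: the 60-70% saving comes from the text, while the 240-minute baseline for writing an article from a blank page is a purely hypothetical figure chosen for illustration.

```python
# Hypothetical baseline: minutes to write one article from a blank page.
# This number is an assumption for illustration, not a measurement from the test.
BASELINE_MINUTES = 240

# Time saving attributed to AI drafting in the text above (percent).
SAVING_LOW_PCT, SAVING_HIGH_PCT = 60, 70


def minutes_saved(baseline, pct):
    """Minutes freed up when AI drafting saves `pct` percent of the baseline."""
    return baseline * pct // 100


saved_low = minutes_saved(BASELINE_MINUTES, SAVING_LOW_PCT)
saved_high = minutes_saved(BASELINE_MINUTES, SAVING_HIGH_PCT)

print(f"Time freed for the last 20%: {saved_low}-{saved_high} minutes")
```

Under that assumed baseline, the freed time comfortably covers a 20-minute edit pass like the one used for Group B.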

Tools we recommend for the AI content workflow

For drafting: Rytr ($7.50/mo) for short-form, Writesonic ($16/mo) for long-form blog posts, Jasper ($39/mo) if you need brand voice training. All produce solid first drafts.

For humanization: WriteHuman is the only tool we tested that consistently passed all 4 detectors. Free trial covers a few thousand words.

For SEO: Scalenut, Frase, or NeuronWriter for content briefs and keyword targeting. Pair these with the writers above. See our Best AI Writing Tools list for full reviews.

What to ignore

Ignore anyone selling 'undetectable AI content' as the goal. The goal is content that helps the reader. Detection is a side metric.

Ignore the binary framing of 'is this AI or human written'. All publishable content in 2026 is some mix of both. The ratio matters less than whether the final piece earns a position in the SERP.

Bottom line: in our test, content depth and unique experience drove rankings. Humanization helped because it forced us to add that depth - not because it fooled any algorithm.

Miriam Alonso

CSM - 3 months testing

Customer Success Manager with 5+ years experience evaluating SaaS tools. Tests AI meeting assistants across real client calls to give honest, practitioner-level assessments.
