What Is AI Video Generation

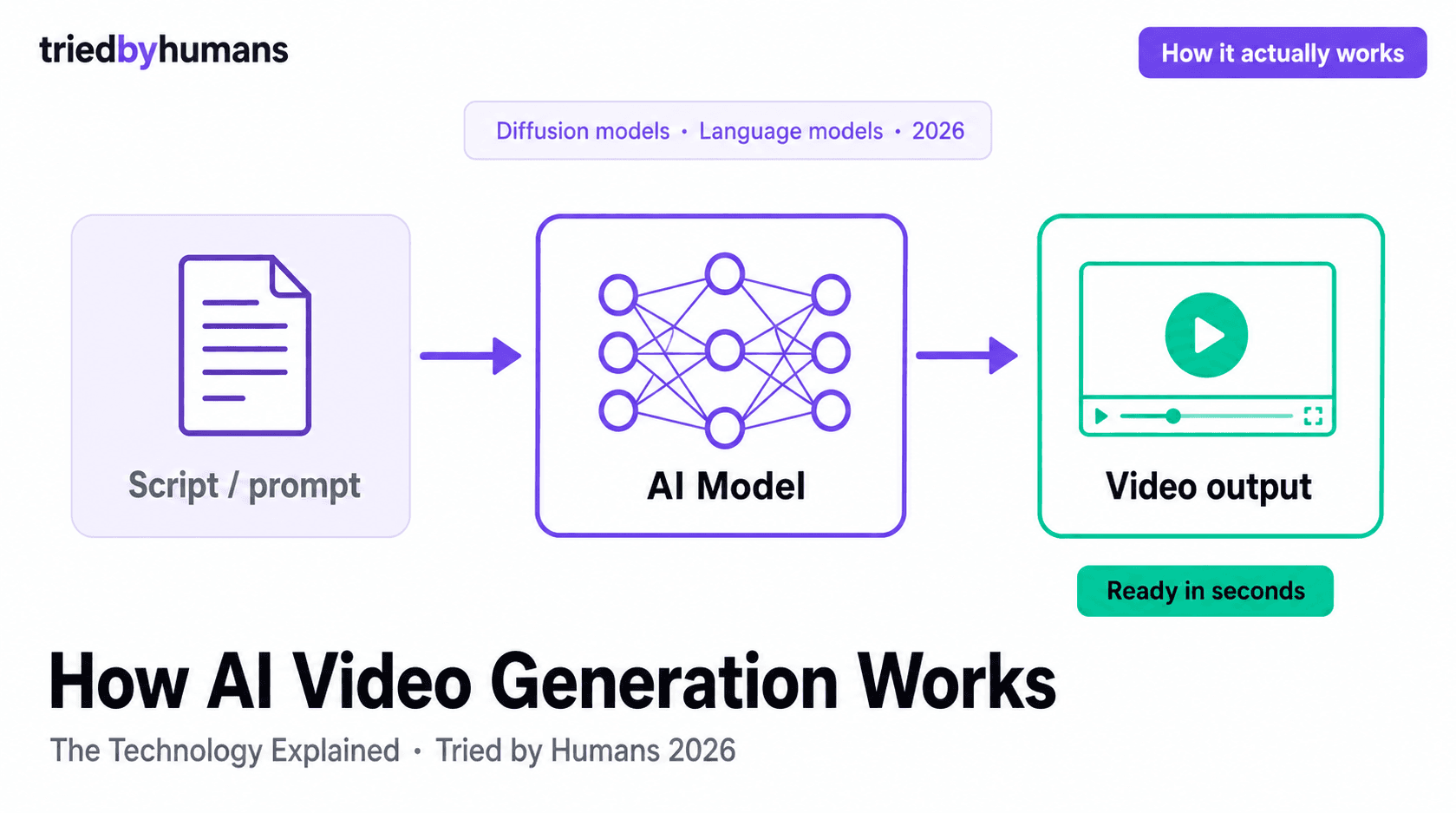

AI video generation refers to any process where artificial intelligence creates video content from inputs that are not themselves video — most commonly text, images, or audio. The term covers several distinct technologies that are sometimes conflated: text-to-video diffusion models (which generate novel video from a text description), avatar synthesis tools (which animate a digital human to deliver a script), and AI video enhancement tools (which use AI to add captions, reframe footage, or extract clips from existing video). According to Wyzowl's 2025 Video Marketing Statistics, 89% of people say watching a video has convinced them to buy a product or service — and AI video generation is the production method that makes video volume commercially viable for most teams. For a practical look at which tools lead the category, see our best AI text-to-video generators guide.

Each category uses different underlying AI approaches and produces different types of output. A text-to-video model produces generative footage — video that did not exist before the model created it. An avatar tool renders a pre-built digital human delivering your script. A clip extraction tool like Opus Clip uses natural language processing and computer vision to identify moments in existing footage that are likely to perform well on social media. These are fundamentally different technologies that happen to share the label 'AI video.'

Text-to-Video: How It Works

Text-to-video models are the most technically complex category. They use diffusion: during training, the model learns to reverse the gradual addition of random noise to an image; at generation time, it starts from pure noise and applies that learned denoising, guided by a text description, to produce a new image. Video generation extends this to sequences of frames that are temporally consistent, meaning adjacent frames look like the same scene rather than a random collection of images.
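To make the noise-adding idea concrete, here is a minimal sketch in plain Python. It assumes the common formulation in which a clean image is blended with Gaussian noise according to a signal fraction (called `alpha_bar` here, an illustrative name). The function names are hypothetical, and the "perfect noise estimate" stands in for what a trained network would have to predict; no real model is involved.

```python
import math
import random

def add_noise(pixels, alpha_bar, rng):
    """Forward diffusion step: blend clean pixels with Gaussian noise.

    alpha_bar is the fraction of signal variance kept
    (close to 1.0 = nearly clean, close to 0.0 = nearly pure noise).
    """
    signal_scale = math.sqrt(alpha_bar)
    noise_scale = math.sqrt(1.0 - alpha_bar)
    noise = [rng.gauss(0.0, 1.0) for _ in pixels]
    noisy = [signal_scale * p + noise_scale * n for p, n in zip(pixels, noise)]
    return noisy, noise

def recover_clean(noisy, predicted_noise, alpha_bar):
    """Invert the blend given a noise estimate (a trained network would supply it)."""
    signal_scale = math.sqrt(alpha_bar)
    noise_scale = math.sqrt(1.0 - alpha_bar)
    return [(x - noise_scale * n) / signal_scale for x, n in zip(noisy, predicted_noise)]

rng = random.Random(0)
clean = [0.1, 0.5, 0.9, 0.3]  # a tiny stand-in for an image
noisy, true_noise = add_noise(clean, alpha_bar=0.5, rng=rng)

# With a perfect noise estimate, the clean pixels come back (up to float error).
restored = recover_clean(noisy, true_noise, alpha_bar=0.5)
```

In a real system the noise estimate is imperfect and the denoising runs over many small steps, which is one reason output quality varies from prompt to prompt.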

The practical result is that you can write a prompt like 'a marketing team reviewing a presentation in a modern office' and the model generates several seconds of video matching that description. Current models are good at short clips (4–8 seconds), abstract or cinematic content, and visual styles they have seen frequently in training data. They struggle with text that appears in the video, precise object manipulation, and coherent long-form narratives. Tools like Fliki use text-to-video generation to source stock-like clips that match a script's content, rather than generating every frame from scratch — a hybrid approach that delivers more predictable quality. Learn the workflow step by step in our guide on how to create AI video from text.
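The hybrid approach of matching clips to a script can be illustrated with a toy example. The sketch below is not how Fliki or any other product works internally; it assumes a hypothetical clip library where each clip carries a list of descriptive tags, and it picks the clip whose tags overlap most with a script sentence using a simple Jaccard score. All names and data are invented for illustration.

```python
def tokenize(text):
    """Lowercase a string and split it into a set of alphabetic words."""
    cleaned = "".join(ch.lower() if ch.isalpha() else " " for ch in text)
    return set(cleaned.split())

def best_clip(sentence, clip_library):
    """Return the clip id whose tags overlap most with the sentence (Jaccard score)."""
    words = tokenize(sentence)

    def score(tags):
        tag_set = set(tags)
        union = words | tag_set
        return len(words & tag_set) / len(union) if union else 0.0

    return max(clip_library, key=lambda clip_id: score(clip_library[clip_id]))

# Hypothetical clip library: clip id -> descriptive tags
clips = {
    "office_meeting": ["team", "office", "presentation", "meeting"],
    "beach_sunset": ["beach", "sunset", "ocean", "waves"],
}

choice = best_clip("A marketing team reviewing a presentation in a modern office", clips)
print(choice)  # office_meeting
```

Production tools would more plausibly compare learned text and video embeddings than raw keywords, but the selection logic has the same shape: score every candidate against the script, then keep the best match.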

Traditional CGI requires manually modeling objects, lighting, and motion. Diffusion models instead learn statistical patterns from billions of captioned images and video clips and generate new content by sampling from those patterns. This makes generation fast and flexible but also unpredictable: the model may produce a photorealistic office scene or an uncanny distortion depending on how well the prompt matches its training distribution.

AI Avatar Video: The Technology

Avatar video tools like Synthesia take a different technical approach from generative text-to-video models. Rather than generating video from scratch on every request, avatar tools use pre-rendered neural representations of digital humans that are animated in real time by a driving signal, typically the audio track derived from your script. The model learns the mapping between phoneme sequences (units of speech sound) and the corresponding facial muscle movements and lip positions of the avatar. For a hands-on look at the leading avatar tools, see our best AI avatar video generators roundup.
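A rough way to picture the phoneme-to-face mapping is a lookup from speech sounds to mouth shapes (often called visemes). The sketch below is a deliberately simplified assumption: real avatar tools learn this mapping with neural networks rather than a hand-written table, and the table entries, viseme names, and timings here are hypothetical. The phoneme symbols follow ARPAbet-style notation.

```python
# Hypothetical phoneme-to-viseme table; real systems learn this mapping from data.
PHONEME_TO_VISEME = {
    "P": "lips_closed", "B": "lips_closed", "M": "lips_closed",
    "F": "lip_to_teeth", "V": "lip_to_teeth",
    "AA": "jaw_open", "AE": "jaw_open",
    "UW": "lips_rounded", "OW": "lips_rounded",
}

def viseme_track(timed_phonemes, default="neutral"):
    """Turn (phoneme, start_seconds) pairs into mouth-shape keyframes.

    Consecutive phonemes that share a viseme collapse into one keyframe.
    """
    keyframes = []
    for phoneme, start in timed_phonemes:
        viseme = PHONEME_TO_VISEME.get(phoneme, default)
        if not keyframes or keyframes[-1][1] != viseme:
            keyframes.append((start, viseme))
    return keyframes

# The word "map" as M-AE-P, with made-up timings in seconds
track = viseme_track([("M", 0.00), ("AE", 0.08), ("P", 0.20)])
print(track)
# [(0.0, 'lips_closed'), (0.08, 'jaw_open'), (0.2, 'lips_closed')]
```

A table like this only drives the mouth. Coordinating the rest of the face and head with the speech is the harder part, and it is where a learned model replaces the lookup.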

In our testing, the quality difference between avatar tools comes down almost entirely to the quality of this phoneme-to-face mapping. Lower-quality tools produce avatars whose lip movements are correct but mechanical — the mouth shapes are right, but the surrounding facial expressions and head movements do not change naturally. Synthesia's neural avatar rendering produces coordinated movement across the whole face and head, which is why its avatars score higher on perceived realism. The avatar itself is a fixed visual asset; what changes is the animation driving it.

Custom avatar creation — where you upload a video of yourself and the tool creates a digital avatar in your likeness — adds another layer: identity transfer. The system must learn not just the geometry of your face but also your characteristic speaking patterns, head tilt tendencies, and expression range. This is technically more demanding than animating a generic pre-built avatar, which is why custom avatars are enterprise-tier features on platforms like Synthesia.

AI Voice Synthesis

AI voice synthesis — also called text-to-speech (TTS) — is the technology that converts written script text into spoken audio. Modern TTS systems use neural networks trained on hours of human speech to produce voices that are natural-sounding, with appropriate pacing, intonation, and prosody. The best current TTS voices are indistinguishable from human narration to most listeners in casual listening conditions.

Tools like Fliki are built primarily around TTS: the video is an accompaniment to the AI voice, which is the primary content delivery mechanism. Fliki's voice library covers 2,000+ AI voices across 75+ languages, each trained on a different voice actor or language corpus. Voice quality varies by language: English, Spanish, French, and German voices have the most training data and sound most natural. Less common languages may have more limited voice options with less natural prosody.

Voice cloning is an extension of TTS that creates a custom voice model from a sample of a specific person's speech. Several enterprise AI video platforms offer voice cloning alongside avatar cloning, so an organization can create a fully digital version of a spokesperson for use in marketing and training content. In our testing, voice cloning quality is impressive for controlled reading but degrades on content that deviates significantly from the style of the training sample.

Standard TTS voices (like those in Fliki's library of 2,000+ voices) are the right choice for most content marketing, training, and social video. They are fast to generate, require no setup, and cover a wide range of languages. Voice cloning makes sense when brand voice consistency requires a specific spokesperson's voice — typically a CEO, celebrity, or brand character — and the volume of content justifies the setup cost of creating and maintaining the cloned voice model.

Where AI Video Generation Is Heading

The trajectory of AI video in 2026 is toward higher coherence, longer clips, and real-time generation. Diffusion model improvements are extending the practical clip length from 4–8 seconds toward 30+ seconds while maintaining temporal consistency. Avatar tools are adding more expressive emotion ranges and gesture libraries. Voice synthesis is approaching the quality threshold where blind listening tests cannot distinguish AI from human at above-chance rates for any language.
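What "above-chance rates" means can be shown with a short calculation. The sketch below assumes a blind test in which each listener judgment is a two-way choice (AI or human), so pure guessing succeeds half the time. It computes a one-sided binomial p-value; the trial counts are hypothetical and not taken from any study.

```python
from math import comb

def p_value_above_chance(correct, trials, chance=0.5):
    """One-sided binomial p-value: P(X >= correct) if listeners were only guessing."""
    return sum(
        comb(trials, k) * chance**k * (1 - chance) ** (trials - k)
        for k in range(correct, trials + 1)
    )

# Hypothetical result: 56 correct identifications out of 100 blind trials.
p = p_value_above_chance(56, 100)
print(f"p-value: {p:.3f}")
```

With these made-up numbers the p-value stays above the conventional 0.05 threshold, so 56 out of 100 would not count as evidence that listeners can tell the voices apart. A much higher hit rate, such as 70 out of 100, would.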

According to G2's AI Video Generation category data, user satisfaction scores for AI video tools have increased 18% year-over-year as output quality has improved and workflows have become more intuitive. The next major threshold is real-time generation: tools that produce video as fast as the user types, enabling live AI presenter applications for webinars, virtual events, and customer service. Several platforms have early versions of this capability in beta. The gap between what AI video can produce and what traditional video production requires continues to close — the question for practitioners is not whether to adopt AI video, but which tools to deploy first.


Miriam Alonso

CSM - 3 months testing

Customer Success Manager with 5+ years of experience evaluating SaaS tools. Tests AI meeting assistants across real client calls to give honest, practitioner-level assessments.
